Azure Machine Learning Studio - Connect Azure Data Lake Gen 2 Storage

Need to connect datasets to Azure ML for further analysis and AI modelling? We wanted to use Azure Data Lake Gen 2 storage and needed to connect it directly to Azure ML Studio.

Problem

How do you connect Azure Storage (Data Lake Gen 2) to Azure ML?

Solution

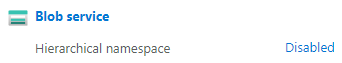

1. First, ensure that the storage account is Azure Data Lake Gen 2. Go to your Storage account > Overview; under Properties you will see Hierarchical namespace. Ensure that it states Enabled. If it states Disabled, click Disabled to start the upgrade.

2. If you clicked Disabled, follow the steps on the upgrade page: Review and agree to changes > Start validation > Start upgrade.
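The portal check in step 1 can also be scripted. The following is a minimal sketch, assuming the `azure-identity` and `azure-mgmt-storage` packages are installed and that you are signed in with credentials that can read the storage account. The function names `hns_status` and `fetch_hns_flag` are hypothetical; the sketch reads the account's `is_hns_enabled` property and treats a missing value as Disabled.

```python
from typing import Optional


def hns_status(is_hns_enabled: Optional[bool]) -> str:
    """Turn the is_hns_enabled property into an Enabled/Disabled label."""
    # Assumption: a value of None (property never set) is treated as Disabled.
    return "Enabled" if is_hns_enabled else "Disabled"


def fetch_hns_flag(subscription_id: str, resource_group: str,
                   account_name: str) -> Optional[bool]:
    """Read is_hns_enabled for one storage account (needs Azure credentials)."""
    # Imported lazily so hns_status() works without the Azure SDK installed.
    from azure.identity import DefaultAzureCredential
    from azure.mgmt.storage import StorageManagementClient

    client = StorageManagementClient(DefaultAzureCredential(), subscription_id)
    account = client.storage_accounts.get_properties(resource_group, account_name)
    return account.is_hns_enabled
```

Calling `hns_status(fetch_hns_flag(subscription_id, resource_group, account_name))` with your own values should give the same label the portal shows under Properties.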

Set Up Container

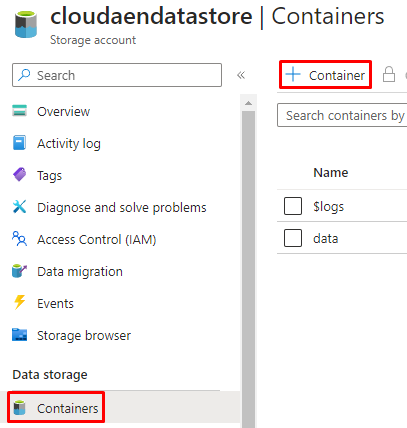

3. After you have upgraded to a storage account with Azure Data Lake Gen2 capabilities, navigate to Containers under Data storage and create a Container for your data. In this example, we created a Container called data.

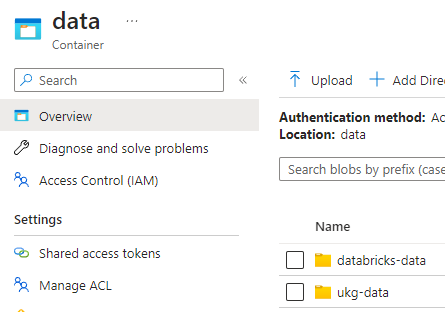

Example: Inside this container you can create directories and upload CSVs or any other data dumps.

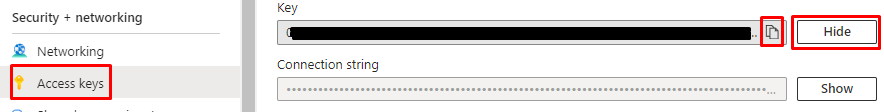
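Container names have to follow the Azure naming rules: 3 to 63 characters, lowercase letters, digits and hyphens only, starting and ending with a letter or digit, and no consecutive hyphens. As a rough sketch, the helpers below check a name against those rules and build the `abfss://` URI that tools such as Spark use to address a path in a Data Lake Gen 2 container. The function names and the storage account name `mystorageacct` are hypothetical.

```python
import re

# 3-63 chars, lowercase letters/digits/hyphens, no leading or trailing
# hyphen, and no two hyphens in a row.
_CONTAINER_RE = re.compile(r"(?!.*--)[a-z0-9][a-z0-9-]{1,61}[a-z0-9]")


def is_valid_container_name(name: str) -> bool:
    """Return True if the name satisfies the container naming rules."""
    return _CONTAINER_RE.fullmatch(name) is not None


def adls_uri(account_name: str, container: str, path: str = "") -> str:
    """Build the abfss:// URI for a path inside a Gen 2 container."""
    if not is_valid_container_name(container):
        raise ValueError(f"invalid container name: {container!r}")
    return f"abfss://{container}@{account_name}.dfs.core.windows.net/{path.lstrip('/')}"


print(adls_uri("mystorageacct", "data", "databricks-data/example.csv"))
# prints abfss://data@mystorageacct.dfs.core.windows.net/databricks-data/example.csv
```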

Generate Access Key

4. Now that the container is set up, you will need an access key. Go back to your storage account > Security + networking > Access keys. Under key1, click Show next to Key, then copy the key. You will need this key in Azure ML Studio.

5. Once you have your key copied, open up your Azure Machine Learning Studio.
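The access key grants full access to the storage account, so if you later use it from a script or notebook it is safer to read it from the environment than to paste it into code. A minimal sketch, assuming you have exported the copied key in an environment variable; the variable name `AZURE_STORAGE_KEY` and both function names are choices made for this example.

```python
import os


def load_account_key(env_var: str = "AZURE_STORAGE_KEY") -> str:
    """Read the storage account key from an environment variable."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set {env_var} to the key1 value copied from the portal")
    return key


def mask_key(key: str, visible: int = 4) -> str:
    """Return a log-safe form that shows only the last few characters."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]
```

For example, `mask_key("abcd1234")` returns `"****1234"`, which is safe to print when confirming which key is in use.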

Connect Datastore to Azure ML Studio

6. Navigate to Data under Assets.

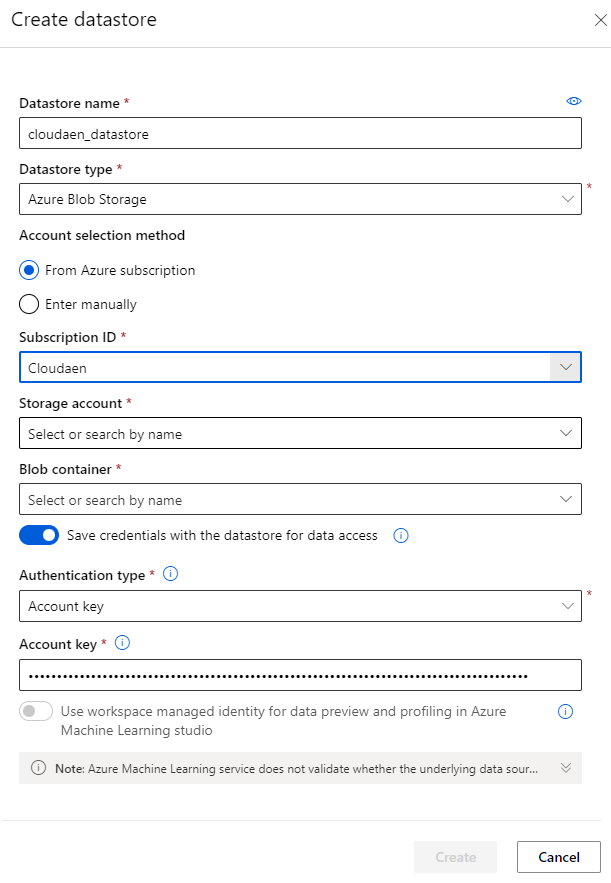

7. Open the Datastores tab and press + Create. Add a Datastore name, then choose Azure Blob Storage > From Azure subscription. Fill out Subscription ID, Storage account, and Blob container with the storage account and container that you just created. Set Authentication type to Account key and paste the key you copied into Account key. When you are finished, click Create.

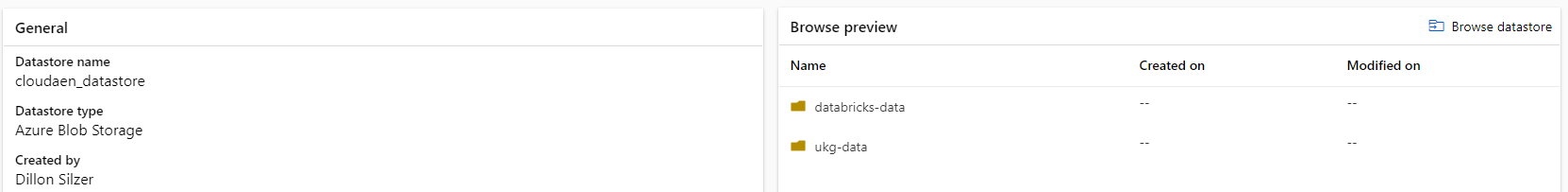

8. Your datastore is now connected. If you open the datastore and browse its contents, you should see the directories that you created in your Container earlier.
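The same registration can be done from code instead of the Studio form. The sketch below assumes the `azureml-core` (SDK v1) package, the same SDK used for the notebook code in this article, and calls its `Datastore.register_azure_blob_container` method. The helper names are hypothetical, and the argument values in the usage note are placeholders for your own.

```python
from typing import Any, Dict


def blob_datastore_args(datastore_name: str, account_name: str,
                        container_name: str, account_key: str) -> Dict[str, Any]:
    """Collect the same fields the Create datastore form asks for."""
    if not account_key:
        raise ValueError("account_key is empty; copy key1 from the storage account first")
    return {
        "datastore_name": datastore_name,
        "account_name": account_name,
        "container_name": container_name,
        "account_key": account_key,
        "overwrite": False,  # do not replace an existing datastore of the same name
    }


def register_blob_datastore(workspace, **args):
    """Register the container as an Azure Blob datastore (needs azureml-core)."""
    # Imported lazily so blob_datastore_args() works without the SDK installed.
    from azureml.core import Datastore

    return Datastore.register_azure_blob_container(workspace=workspace, **args)
```

With a `Workspace` object in hand, `register_blob_datastore(workspace, **blob_datastore_args("cloudaen_datastore", "mystorageacct", "data", account_key))` would register the `data` container under the datastore name used later in this article; `mystorageacct` stands in for your storage account name.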

(Optional) Create Notebook and Query Datastore

9. (Optional) If you are interested in how to get data from the datastore, you can use the following Python code:

```python
from azureml.core import Workspace, Dataset, Datastore
import pandas as pd  # to_pandas_dataframe() returns a pandas DataFrame

# Identify the Azure ML workspace to connect to.
subscription_id = 'your-subscription-id'
resource_group = 'cloudaen-ai'
workspace_name = 'cloudaen-ai-workspace'
workspace = Workspace(subscription_id, resource_group, workspace_name)

# Look up the datastore created in step 7 by its name.
datastore = Datastore.get(workspace, "cloudaen_datastore")

# Point a tabular dataset at a CSV file in the container and load it.
dataset = Dataset.Tabular.from_delimited_files(path=(datastore, 'databricks-data/example.csv'))
df = dataset.to_pandas_dataframe()
```

From here, if you call display(df) in the notebook, you should see your CSV data.

Summary

As we move more towards using Machine Learning, we need the necessary infrastructure to access data within our cloud environments. This article is meant to help developers and data analysts get their environment set up and access necessary data storage and information within Azure Data Lake Gen 2 storage.