Azure Databricks - Variables from Azure Data Factory

Are you seeking to integrate variables from Azure Data Factory into your Databricks notebooks? This blog provides a solution for pushing variables from ADF directly into your Databricks environment.

Problem

Using Azure Data Factory (ADF) variables in an Azure Databricks notebook is straightforward once the pipeline is set up correctly. A common problem is calling variable=dbutils.widgets.get("variable") in a Python notebook before the pipeline actually passes that value: the notebook has no parameter with that name, so the call fails. The steps below configure the pipeline so that the value reaches the notebook.

Solution

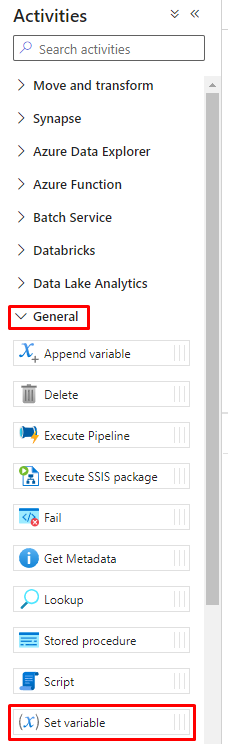

1. In Azure Data Factory, add a Set variable activity from Activities > General > Set variable:

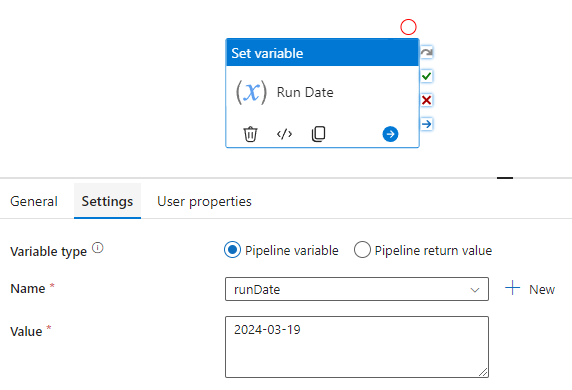

2. Set the variable name and value in the Settings tab (e.g. runDate as the name and 2024-03-19 as the value):

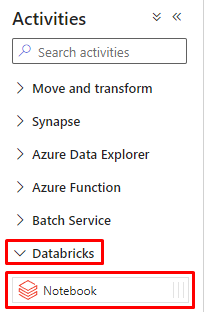

3. Add a Databricks Notebook activity from Activities > Databricks > Notebook:

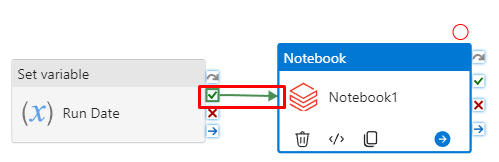

4. Connect the Set variable activity to the Notebook activity with an On success dependency, so that the notebook runs only after the variable has been set:

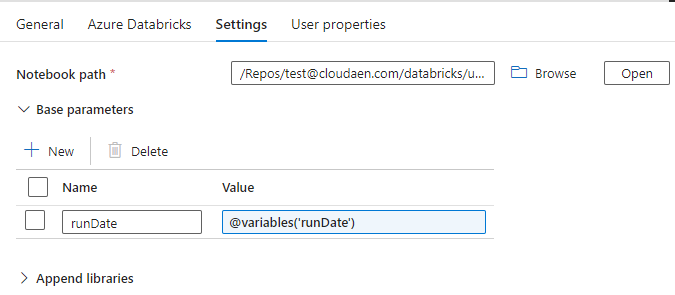

5. Click on the Notebook and go to Settings. Add your Databricks Notebook path and then expand Base parameters.

Press + New and enter the parameter (e.g. runDate as the name and @variables('runDate') as the value).

6. Once the Notebook activity is configured in ADF, add the following command to your Python notebook to read the variable from the ADF pipeline:

runDate = dbutils.widgets.get("runDate")

When you run the Azure Data Factory pipeline, your Notebook logs should show that runDate equals your date (e.g. 2024-03-19). To confirm this, add a simple print(runDate) to the same Notebook.
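
If the same notebook is also run interactively, outside an ADF-triggered run, one option is to declare the widget with a default before reading it and to validate the value. The following is a minimal sketch, not part of the walkthrough above: `read_run_date`, `FakeWidgets`, and the fallback date are hypothetical names chosen for illustration, and it assumes runDate arrives as a YYYY-MM-DD string. In Databricks you would pass `dbutils.widgets`, where a value passed through Base parameters is expected to take precedence over the widget default. The stand-in class exists only so that the sketch runs outside Databricks.

```python
from datetime import date, datetime


def read_run_date(widgets, name="runDate", default="2024-03-19") -> date:
    """Read a date parameter from a widgets object such as dbutils.widgets.

    Assumes the value is a YYYY-MM-DD string (an assumption of this sketch).
    """
    # Declare the widget with a default so that interactive runs, which
    # receive no base parameters from ADF, still have a value to read.
    widgets.text(name, default)
    raw = widgets.get(name)
    # strptime raises ValueError if the pipeline passed a malformed date.
    return datetime.strptime(raw, "%Y-%m-%d").date()


class FakeWidgets:
    """Hypothetical local stand-in for dbutils.widgets, for testing only."""

    def __init__(self, passed=None):
        self._values = dict(passed or {})

    def text(self, name, default_value, label=None):
        # Keep a value that was passed in; otherwise fall back to the default.
        self._values.setdefault(name, default_value)

    def get(self, name):
        return self._values[name]


# In a Databricks notebook: run_date = read_run_date(dbutils.widgets)
run_date = read_run_date(FakeWidgets({"runDate": "2024-03-19"}))
print(run_date)
```

Parsing the string into a date early means a malformed value fails at the top of the notebook rather than partway through later processing.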